Fibonacci node lifecycle with automatic AMI updates for EKS clusters

SOLERTIA ET LABORE

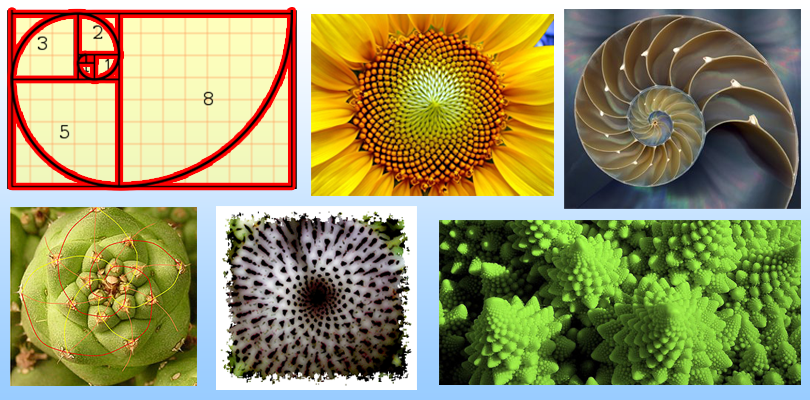

What is the Fibonacci sequence?

The Fibonacci sequence is a series of numbers in which each number is the sum of the two that precede it. Starting at 0 and 1, the sequence looks like this: 0, 1, 1, 2, 3, 5, 8, 13, 21, 34, and so on forever. The Fibonacci sequence can be described using a mathematical equation: $X_{n+2} = X_{n+1} + X_n$.
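As a minimal sketch of the recurrence just described, the sequence can be produced with a small Python generator. The function name `fibonacci` and the variable names are illustrative only.

```python
from itertools import islice
from typing import Iterator


def fibonacci() -> Iterator[int]:
    """Yield the Fibonacci sequence 0, 1, 1, 2, 3, 5, ... indefinitely."""
    current, following = 0, 1
    while True:
        yield current
        # X(n+2) = X(n+1) + X(n): slide the two-term window forward by one.
        current, following = following, current + following


first_ten = list(islice(fibonacci(), 10))
print(first_ten)  # [0, 1, 1, 2, 3, 5, 8, 13, 21, 34]
```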

Fibonacci patterns found in nature

The Fibonacci sequence appears in nature because it represents structures and sequences that model physical reality. We see it in the spiral patterns of certain flowers because it inherently models a form of spiral.

Fibonacci Retracement and Predicting Stock Prices

Ratios derived from the Fibonacci sequence seem to play a role in the stock market, just as they do in nature. Technical traders attempt to use them, in a technique known as Fibonacci retracement, to determine critical points where an asset's price momentum is likely to reverse. The best brokers for day traders can further aid investors trying to predict stock prices via Fibonacci retracements.

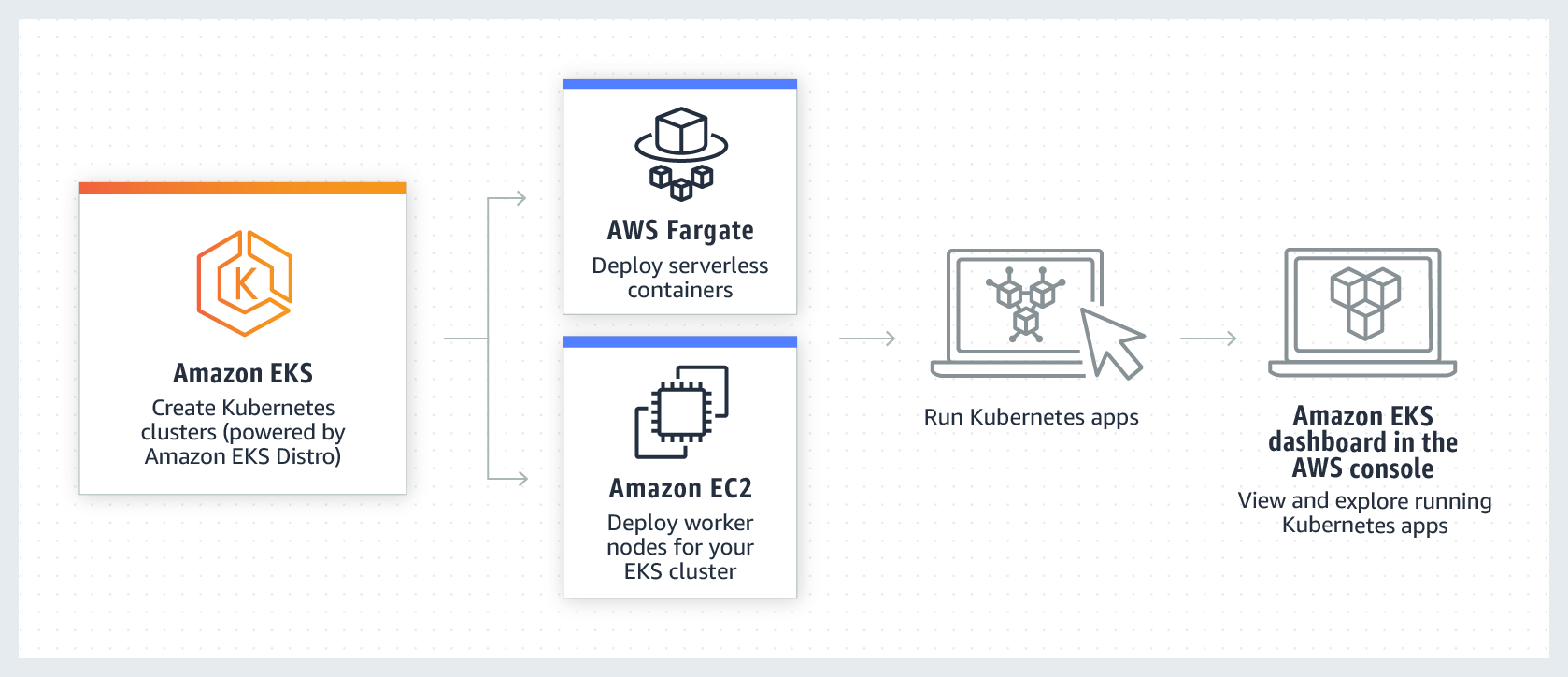

EKS Architecture

Amazon Elastic Kubernetes Service (Amazon EKS) is a managed container service to run and scale Kubernetes applications in the cloud or on-premises.

EKS architecture is designed to eliminate any single points of failure that may compromise the availability and durability of the Kubernetes control plane.

Control Plane

The Kubernetes control plane managed by EKS runs inside an EKS-managed VPC. The EKS control plane comprises the Kubernetes API server nodes and an etcd cluster. The API server nodes, which run components like the API server, scheduler, and kube-controller-manager, run in an auto-scaling group.

EKS runs a minimum of two API server nodes in distinct Availability Zones (AZs) within an AWS Region. Likewise, for durability, the etcd server nodes also run in an auto-scaling group that spans three AZs.

EKS runs a NAT Gateway in each AZ, and API servers and etcd servers run in a private subnet. This architecture ensures that an event in a single AZ doesn’t affect the EKS cluster's availability.

Data Plane

To operate highly available and resilient applications, you need a highly available and resilient data plane. An elastic data plane ensures that Kubernetes can scale and heal your applications automatically. A resilient data plane consists of two or more worker nodes, can grow and shrink with the workload, and automatically recovers from failures.

Data Plane - Worker Node Pools (WNP)

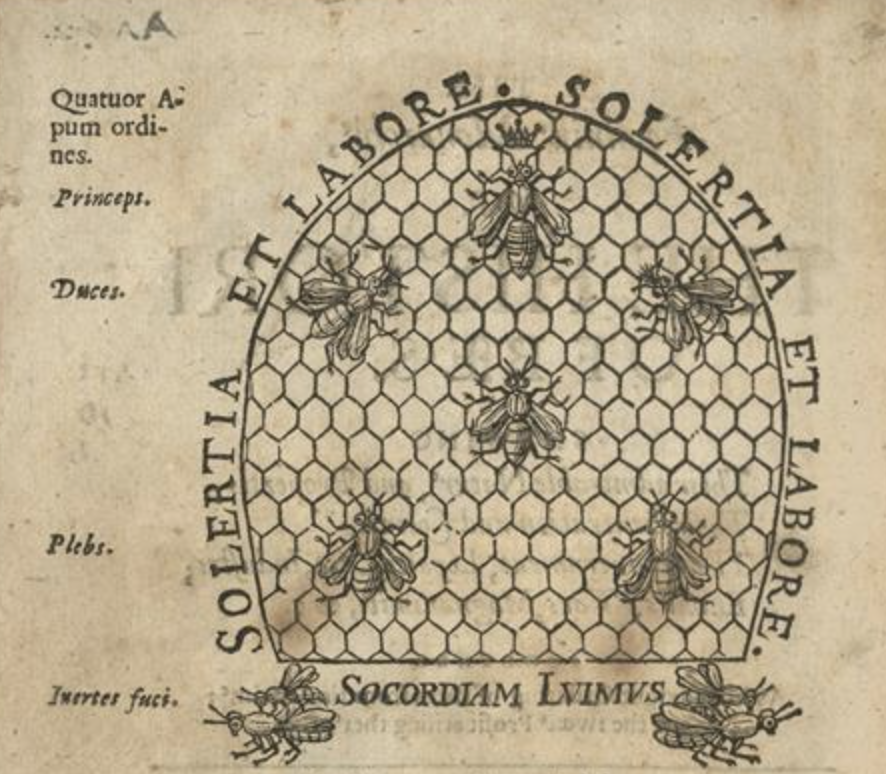

A best practice when distributing workloads is to isolate cluster operators from application services. For this PoC example we will be following the "shared pattern analogy" between nature and silicon-based technologies.

Inspired by Charles Butler’s Feminine monarchy (1609)

Charles Butler’s Feminine monarchy made an extraordinary contribution to the literature on apiculture when it first appeared in 1609. The book established in popular consciousness the relatively recent discovery (observed by Luis Mendez de Torres in 1586, and confirmed by Swammerdam’s microscopic dissections later in the seventeenth century) that colonies were presided over by a Queen bee rather than a male ruler. Butler described both contemporary methods of keeping bees in traditional domed skep hives and his own proposals for technical improvements.

The Feminine monarchy remained a practical guide for those wanting to keep bees for the next 250 years, until the development of hives with moveable combs changed the practice of beekeeping. The book is divided into ten chapters, covering the nature and properties of bees and their queen, the bee-garden and siting of hives, the construction of the hives themselves, the breeding of bees and drones, swarming and hiving, the work of bees, enemies of bees, how to feed bees, how to remove bees, and the fruit and profit of beekeeping.

The work draws heavily on Butler’s own experience of keeping bees. His observations of bee behaviour were meticulous, and even went so far as to attempt to represent the particular sounds made by a Queen bee who had yet to mate by use of musical notation. In the second edition of his book, he expanded this into a four-part madrigal! It was an innovative way of recording observational data, probably more interesting now as an example of seventeenth-century musical notation, than as a scientific study. It was reported that Artificial Intelligence (AI) is being used in hives to monitor and interpret the way bees buzz (see news story).

The four orders of bees:

- Princeps (CP)

- Duces (DP)

- Plebs (DP)

- Fuci (DP)

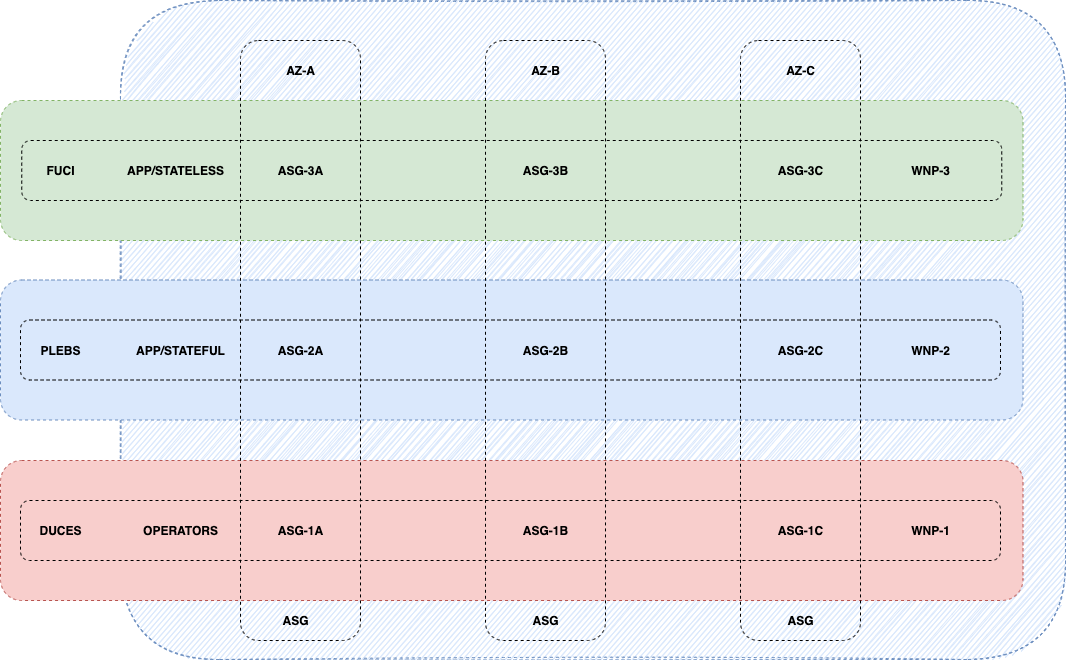

Data Plane Worker Node Pool (WNP) structure will be distributed as:

- DUCES - Reserved to Cluster Operators
  - 3 Worker Node Groups, 1 per AZ (A, B & C)
    - Az-1A (WNP-1)
    - Az-1B (WNP-1)
    - Az-1C (WNP-1)
- PLEBS - Reserved to Stateful Application workloads
  - 3 Worker Node Groups, 1 per AZ (A, B & C)
    - Az-2A (WNP-2)
    - Az-2B (WNP-2)
    - Az-2C (WNP-2)
- FUCI - Reserved to Stateless Application workloads
  - 1 Worker Node Group
    - Az-3ABC (WNP-3)

WNP - Architecture Diagram

Node Selectors for each Worker Node Pool (WNP) assigned to the Managed Worker Node Group (M-WNG)

You can constrain a Pod so that it can only run on a particular set of nodes. There are several ways to do this, and the recommended approaches all use label selectors to facilitate the selection.

    # APIS is the Latin word used for bees

    # WNP-1
    nodeSelector:
      apis: duces

    # WNP-2
    nodeSelector:
      apis: plebs

    # WNP-3
    nodeSelector:
      apis: fuci
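To show where such a selector sits inside a workload definition, here is a hedged Python sketch that builds a Pod manifest as a plain dictionary and prints it as JSON, which `kubectl apply -f` also accepts. The pod name `web`, the image `nginx:stable`, and the helper `pod_manifest` are hypothetical; only the label key `apis` and its three values come from the selectors above.

```python
import json

# Label values taken from the node selectors above; the key is "apis".
WORKER_NODE_POOLS = {"WNP-1": "duces", "WNP-2": "plebs", "WNP-3": "fuci"}


def pod_manifest(name: str, image: str, pool: str) -> dict:
    """Build a Pod manifest pinned to one Worker Node Pool via nodeSelector."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            # The scheduler only places the Pod on nodes carrying this label.
            "nodeSelector": {"apis": WORKER_NODE_POOLS[pool]},
            "containers": [{"name": name, "image": image}],
        },
    }


# Hypothetical stateless workload scheduled onto the FUCI pool (WNP-3).
manifest = pod_manifest("web", "nginx:stable", "WNP-3")
print(json.dumps(manifest, indent=2))
```

The selector only has an effect if the worker nodes in each pool actually carry the matching `apis` label.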

EKS and Managed Worker Node Groups (M-WNG)

Amazon EKS managed node groups automate the provisioning and lifecycle management of nodes (Amazon EC2 instances) for Amazon EKS Kubernetes clusters. With Amazon EKS managed node groups, you don’t need to separately provision or register the Amazon EC2 instances that provide compute capacity to run your Kubernetes applications. You can create, automatically update, or terminate nodes for your cluster with a single operation. Node updates and terminations automatically drain nodes to ensure that your applications stay available.

Every managed node is provisioned as part of an Amazon EC2 Auto Scaling group that's managed for you by Amazon EKS. Every resource including the instances and Auto Scaling groups runs within your AWS account. Each node group runs across multiple Availability Zones that you define.

Maximum Instance Lifetime

Amazon EC2 Auto Scaling lets you safely and securely recycle instances in an Auto Scaling group (ASG) at a regular cadence. The Maximum Instance Lifetime parameter helps you ensure that instances are recycled before reaching the specified lifetime, giving you an automated way to adhere to your security, compliance, and performance requirements. You can either create a new ASG or update an existing one to include the Maximum Instance Lifetime value of your choice, up to 365 days. The feature launched with a seven-day minimum; current AWS documentation lists a minimum of one day (86,400 seconds), which the shorter rotations used later in this article depend on.
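The parameter is expressed in seconds, so a lifetime in days has to be converted before it is applied. The sketch below is an assumption-laden illustration: it only builds the keyword arguments `AutoScalingGroupName` and `MaxInstanceLifetime` that boto3's `update_auto_scaling_group` call accepts, without calling AWS. The helper name `asg_update_params` and the group name `wnp-1c` are hypothetical. The lower bound is a parameter because the text above mentions a seven-day minimum, while a one-day minimum is assumed as the default here.

```python
SECONDS_PER_DAY = 86_400
MAX_LIFETIME_DAYS = 365  # upper bound stated in the text above


def asg_update_params(asg_name: str, days: int, min_days: int = 1) -> dict:
    """Return kwargs for an ASG update that sets the maximum instance lifetime.

    min_days defaults to 1, which is an assumption; pass 7 to enforce the
    seven-day minimum mentioned in the text.
    """
    if not min_days <= days <= MAX_LIFETIME_DAYS:
        raise ValueError(
            f"lifetime must be between {min_days} and {MAX_LIFETIME_DAYS} days, got {days}"
        )
    return {
        "AutoScalingGroupName": asg_name,
        "MaxInstanceLifetime": days * SECONDS_PER_DAY,
    }


params = asg_update_params("wnp-1c", 13)
print(params)  # {'AutoScalingGroupName': 'wnp-1c', 'MaxInstanceLifetime': 1123200}
```

With boto3 installed and credentials configured, the dictionary could be passed as `boto3.client("autoscaling").update_auto_scaling_group(**params)`.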

Summary

As seen so far, we have adopted a Worker Node Pool (WNP) strategy to isolate the workloads into two major groups within our cluster (cluster operators and application services) by following the "shared pattern analogy" between nature and silicon-based technology.

The WNPs have been distributed according to the bee hierarchy. One important thing to highlight is the usage of Managed Worker Node Groups (M-WNG). To understand the difference between managed and self-managed node groups, refer to the respective AWS docs.

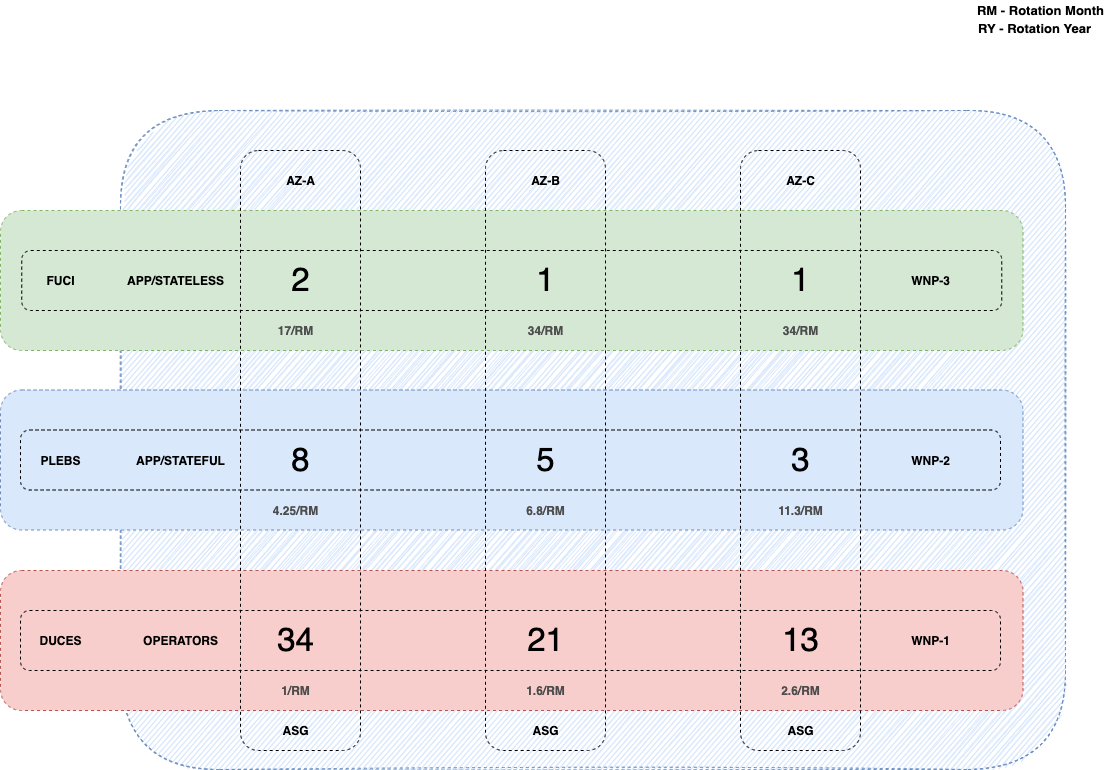

We will be taking advantage of the Maximum Instance Lifetime ASG feature and start assigning the Fibonacci sequence to each individual Worker Node Group as distributed per their respective Worker Node Pool.

The diagram is a graphical interpretation of the rotation weight assigned to each WNP/ASG block. WNP rotation is defined as:

- FUCI
  - Az[A,B,C]: Daily rotation
- PLEBS
  - Az-C: 3rd day rotation
  - Az-B: 5th day rotation
  - Az-A: 8th day rotation
- DUCES
  - Az-C: 13th day rotation
  - Az-B: 21st day rotation
  - Az-A: 34th day rotation

The cluster worker nodes will be rotated based on their Fibonacci weight in a cycle of 34 days. The FUCI pool (WNP-3), given its stateless workload, doesn't require the deployment of 3 individual ASGs.
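The rotation weights can be checked with a few lines of Python. This is a sketch under simplifying assumptions: the group keys such as `PLEBS-C` are shorthand invented here, and the count of rotations per cycle is plain integer division that ignores the time needed to replace an instance.

```python
# Rotation interval in days for each node group, as listed above.
ROTATION_DAYS = {
    "FUCI-ABC": 1,
    "PLEBS-C": 3,
    "PLEBS-B": 5,
    "PLEBS-A": 8,
    "DUCES-C": 13,
    "DUCES-B": 21,
    "DUCES-A": 34,
}
CYCLE_DAYS = 34


def is_fibonacci(n: int) -> bool:
    """Return True if n appears in the Fibonacci sequence."""
    a, b = 0, 1
    while a < n:
        a, b = b, a + b
    return a == n


# Every interval in the table is a Fibonacci number.
assert all(is_fibonacci(days) for days in ROTATION_DAYS.values())

# How many times each group's lifetime elapses within one 34-day cycle.
rotations_per_cycle = {group: CYCLE_DAYS // days for group, days in ROTATION_DAYS.items()}

for group, days in ROTATION_DAYS.items():
    print(f"{group:<9} every {days:>2} day(s): {rotations_per_cycle[group]:>2} rotation(s) per cycle")
```

Under these assumptions the stateless FUCI group turns over 34 times per cycle, while the DUCES group in AZ A turns over once.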

AMI Patching with GitHub Actions and eksctl example

One big advantage of Managed Worker Nodes is that, once an update is triggered, AWS automatically handles the rolling AMI update, draining each node before it is replaced.

eksctl is a simple CLI tool for creating and managing clusters on EKS - Amazon's managed Kubernetes service for EC2.

GitHub Actions makes it easy to automate all your software workflows, now with world-class CI/CD. Build, test, and deploy your code right from GitHub. Make code reviews, branch management, and issue triaging work the way you want.

For the AMI patching, we will implement GitHub Actions schedules (cron jobs) that match the same Fibonacci sequence used for the node lifecycle. This way we patch and cycle the nodes on the same day.
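One caveat worth illustrating: a cron step in the day-of-month field counts from the 1st of each calendar month, so it approximates a rolling N-day interval rather than reproducing it. The sketch below assumes standard cron step semantics and a 31-day month; the helper names `schedule_expression` and `cron_step_days` are hypothetical.

```python
def schedule_expression(step_days: int) -> str:
    """Cron expression for 00:00 on every step_days-th day-of-month."""
    return f"0 0 */{step_days} * *"


def cron_step_days(step_days: int, days_in_month: int = 31) -> list[int]:
    """Days of the month matched by '*/step_days' in the day-of-month field.

    Steps start at day 1 and restart at the beginning of every month.
    """
    return list(range(1, days_in_month + 1, step_days))


print(schedule_expression(13))  # 0 0 */13 * *
print(cron_step_days(13))       # [1, 14, 27]
```

For a 13-day step this means runs on the 1st, 14th, and 27th, followed by another run on the 1st of the next month, which is fewer than 13 days later.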

Example: WNP-1C - AMI Update

    # Worker Node Pool 1 - Availability Zone C (WNP-1C)
    # AMI patching schedule for each 13th day of the Fibonacci cycle
    name: WNP1-C / AMI Update

    on:
      schedule:
        # Runs "At 00:00 on every 13th day-of-month." (see https://crontab.guru)
        # GitHub Actions evaluates cron schedules in UTC.
        - cron: '0 0 */13 * *'

    jobs:
      deploy:
        name: WNP1-C / AMI Update
        runs-on: ubuntu-latest
        steps:
          # Authenticate against AWS and assume the role used for the upgrade
          - name: Configure AWS Credentials
            uses: aws-actions/configure-aws-credentials@v1
            with:
              aws-access-key-id: ${{ secrets.AWS_ACCESS_KEY_ID }}
              aws-secret-access-key: ${{ secrets.AWS_SECRET_ACCESS_KEY }}
              aws-region: ${{ secrets.AWS_REGION }}
              role-to-assume: ${{ secrets.AWS_ROLE_TO_ASSUME }}
              role-duration-seconds: 3600

          # Download the latest eksctl release and put it on the PATH
          - name: Install eksctl
            run: |
              sudo apt-get install -y curl
              curl --silent --location "https://github.com/weaveworks/eksctl/releases/latest/download/eksctl_$(uname -s)_amd64.tar.gz" | tar xz -C /tmp
              sudo mv /tmp/eksctl /usr/local/bin

          # Roll the managed node group onto the latest AMI for the given Kubernetes version
          - name: Deploy eksctl upgrade
            run: |
              eksctl upgrade nodegroup --name=${{ secrets.WNP1_C }} --cluster=${{ secrets.CLUSTER_NAME }} --kubernetes-version=${{ secrets.EKS_VERSION }}